This can also depend on whether you pass `--no-half --no-half-vae` as arguments to Automatic1111. … For FP16, any number with magnitude well below 2^(-24) is flushed to zero because it cannot be represented (2^(-24) is the smallest denormalized value in FP16).

… (compute-bound vs memory-bound problems), and users should use the tuning guide to remove other bottlenecks in their training scripts. FLOP/s per dollar for FP32 and FP16 performance. … DeepSpeed supports full FP32 and FP16 mixed precision.

So basically when we calculate this circle with FP32 (single precision) vs FP16 … Always using FP64 would be ideal, but it is just too slow. FP16 is important; flat-out forcing it off seems sub-optimal. … Pi would be this exact under the different FP standards: Pi in FP64 = 3.
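The underflow and pi remarks above can be checked with a short sketch. It uses only Python's standard `struct` module, whose `e` and `f` format codes store IEEE 754 half and single precision values. The helper names `round_to`, `to_fp16`, and `to_fp32` are made up for this illustration and do not come from any of the tools mentioned in this post.

```python
import math
import struct


def round_to(fmt: str, x: float) -> float:
    """Store a Python float (FP64) in the given struct format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, x))[0]


def to_fp16(x: float) -> float:
    """Round x to the nearest IEEE 754 half precision value."""
    return round_to("<e", x)


def to_fp32(x: float) -> float:
    """Round x to the nearest IEEE 754 single precision value."""
    return round_to("<f", x)


# Pi at three precisions. FP16 keeps only 11 significant bits.
pi_fp64 = math.pi
pi_fp32 = to_fp32(math.pi)
pi_fp16 = to_fp16(math.pi)  # 3.140625

# 2**-24 is the smallest positive FP16 value (a denormal).
smallest_fp16 = to_fp16(2.0 ** -24)

# A magnitude far enough below that limit cannot be represented and becomes 0.0.
underflowed = to_fp16(2.0 ** -26)

print("pi fp64:", repr(pi_fp64))
print("pi fp32:", repr(pi_fp32))
print("pi fp16:", repr(pi_fp16))
print("2**-24 in fp16:", smallest_fp16)
print("2**-26 in fp16:", underflowed)
```

FP32 represents `2.0 ** -26` without trouble, which is why small gradients that vanish in FP16 survive in FP32.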

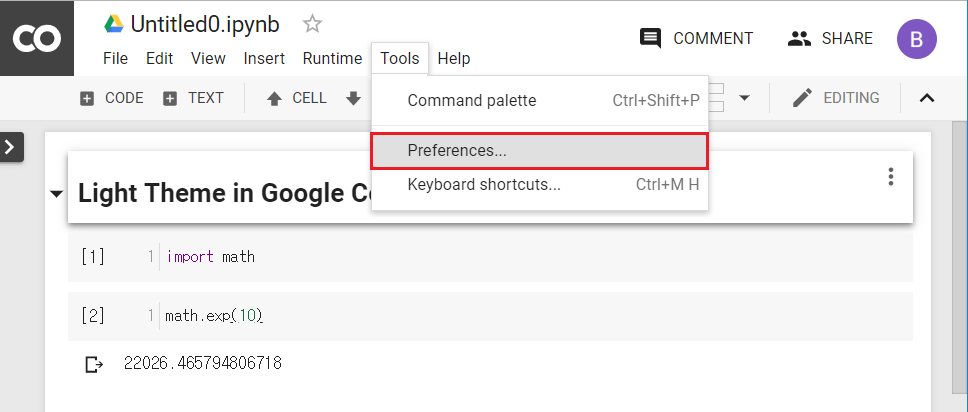

On FP16: comment to enable, in webui-user. This is the index post; specific benchmarks are in their own posts below: fp16 vs bf16 vs tf32 vs fp32.

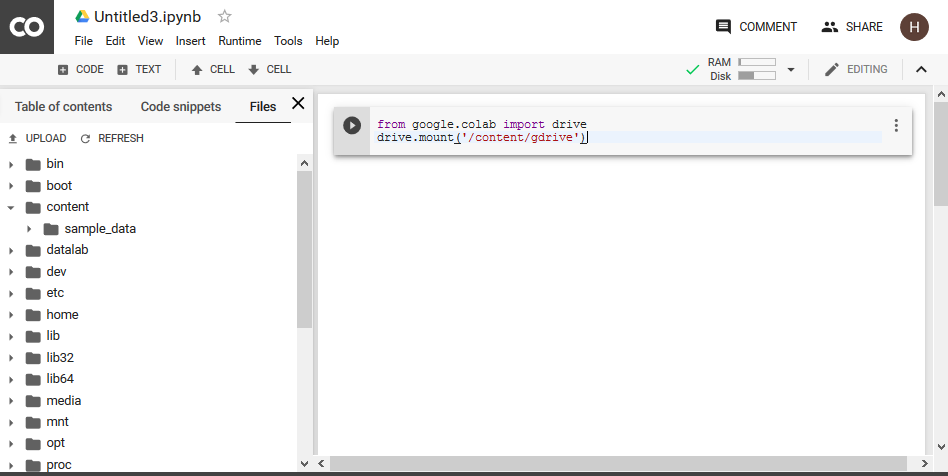

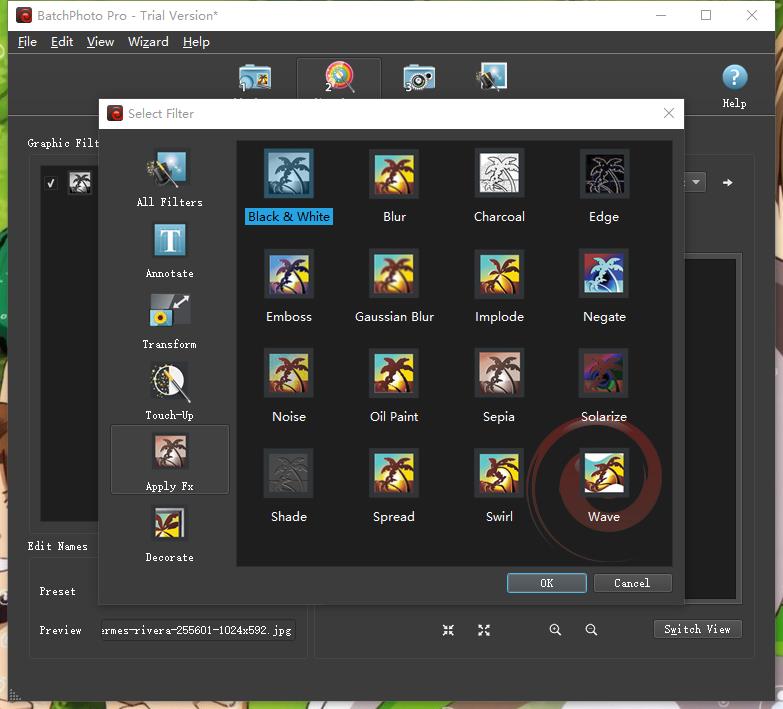

Automatic: the user who created the Automatic1111 web UI. You can … By default, Automatic1111 loads and processes everything as FP16, so you wouldn't notice a difference between a model that is FP16 and one that is FP32. The biggest limitation to … FP16 is half the size of FP32, so two FP16 values fit in the same memory space as a single FP32 value.

You could have the same seed, same prompt, same everything and likely get nearly the same results with each. The difference is that extra data not relevant to image generation is pruned from the full model, and we're left with FP16 or FP32. Significantly reduced VRAM consumption and size.

… FP32 (probably just converting on the fly for nearly free), but on SKL / KBL chips you get about double the throughput of FP32 for GPGPU Mandelbrot (note the log scale on the Mpix/s axis of the chart in that link). … 1-v, HuggingFace) at 768x768 resolution and (Stable … Deep Learning with Dell EMC Isilon. This section lists the supported NVIDIA® TensorRT™ features by platform and software. We compared FP16 to FP32 performance and used maxed-out batch sizes for each GPU.

Prompt search is also possible, and it can be used as a direct linkable uploader. The best news is that there is a CPU Only setting for people who don't have enough VRAM to run Dreambooth on their GPU. (I changed the output type using amp autocast (float16, float32).) I wonder if there is a difference in performance when applied to other tasks.
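The size claim is simple arithmetic, sketched below with the standard `struct` module. The parameter count is a made-up round number chosen for illustration, not the size of any particular checkpoint, and `weights_size_gib` is a hypothetical helper.

```python
import struct

# Bytes per value, taken from the struct format codes for half ("e")
# and single ("f") precision.
BYTES_PER_VALUE = {
    "fp16": struct.calcsize("<e"),  # 2 bytes
    "fp32": struct.calcsize("<f"),  # 4 bytes
}


def weights_size_gib(num_params: int, dtype: str) -> float:
    """Approximate size of the raw weights in GiB, ignoring file metadata."""
    return num_params * BYTES_PER_VALUE[dtype] / 2 ** 30


# Hypothetical model with one billion parameters (assumption for illustration).
NUM_PARAMS = 1_000_000_000

size_fp32 = weights_size_gib(NUM_PARAMS, "fp32")
size_fp16 = weights_size_gib(NUM_PARAMS, "fp16")

print(f"fp32 weights: {size_fp32:.2f} GiB")
print(f"fp16 weights: {size_fp16:.2f} GiB")

# Two FP16 values occupy exactly the space of one FP32 value.
packed_pair = struct.pack("<ee", 1.5, -2.0)
assert len(packed_pair) == struct.calcsize("<f")
```

The same factor of two applies to the weights held in VRAM, which is where the reduced memory consumption comes from.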

(source: NVIDIA Blog) While FP16 and FP32 have been around for quite some time, BF16 and TF32 are only available on GPUs of the Ampere architecture and newer; TPUs support BF16 as well. What is FP16 vs FP32 in Python all about? My potential business partner and I are building a deep learning setup for working with time series. Stable Diffusion WebUI from AUTOMATIC1111 has proven to be a powerful tool for generating high-quality images using the diffusion model. BF16 has 8 exponent bits, like FP32, meaning it can encode numbers approximately as large as FP32 can. We find that the price-performance doubling time in FP16 was 2.
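The range point about BF16 can be illustrated without a GPU. The sketch below emulates BF16 by keeping the top 16 bits of an FP32 bit pattern (1 sign bit, 8 exponent bits, 7 mantissa bits). This is a simplification: it truncates rather than rounding to nearest, which real hardware typically does, and `to_bf16` is a hypothetical helper written for this example.

```python
import struct


def fp32_to_bits(x: float) -> int:
    """Return the 32-bit pattern of x stored as FP32."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def bits_to_fp32(bits: int) -> float:
    """Interpret a 32-bit pattern as an FP32 value."""
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def to_bf16(x: float) -> float:
    """Emulate BF16 by truncating FP32 to its upper 16 bits (no rounding)."""
    return bits_to_fp32(fp32_to_bits(x) & 0xFFFF0000)


# Largest finite values of each format.
FP16_MAX = 65504.0                      # 5 exponent bits
BF16_MAX = bits_to_fp32(0x7F7F0000)     # 8 exponent bits, 7 mantissa bits
FP32_MAX = bits_to_fp32(0x7F7FFFFF)     # 8 exponent bits, 23 mantissa bits

print("fp16 max:", FP16_MAX)
print("bf16 max:", BF16_MAX)
print("fp32 max:", FP32_MAX)

# A value far beyond the FP16 range is still finite in BF16,
# although only about 2 to 3 decimal digits survive.
big = 1.0e30
print("1e30 as bf16:", to_bf16(big))

# FP16 cannot hold it at all: struct refuses to pack the value.
try:
    struct.pack("<e", big)
    fp16_overflowed = False
except OverflowError:
    fp16_overflowed = True
print("1e30 overflows fp16:", fp16_overflowed)
```

BF16 trades mantissa bits for exponent bits, so it matches the FP32 range while being coarser than FP16 for values that both can represent.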
